Qualcomm Patents a Video Decoder That Stops Waiting for Data to Arrive in Order

Qualcomm's latest patent targets a surprisingly mundane but costly problem in video decoding: processor cores sitting idle while waiting for the 'right' data to arrive in the 'right' order. The fix is letting the decoder skip ahead and work on whatever data shows up first.

What Qualcomm's out-of-order video decoder actually does

Imagine you're assembling a two-part report, but you're told to always finish Part A before touching Part B, even if Part B is sitting right in front of you and Part A is still stuck in the mail. That's essentially how traditional video decoders treat brightness and color data.

When your phone or TV decodes a video, it handles each frame as two types of data: luma (brightness) and chroma (color). Classic pipelines process them in a fixed sequence, luma first, so if the brightness data is delayed, the hardware stalls even if the color data is ready and waiting.

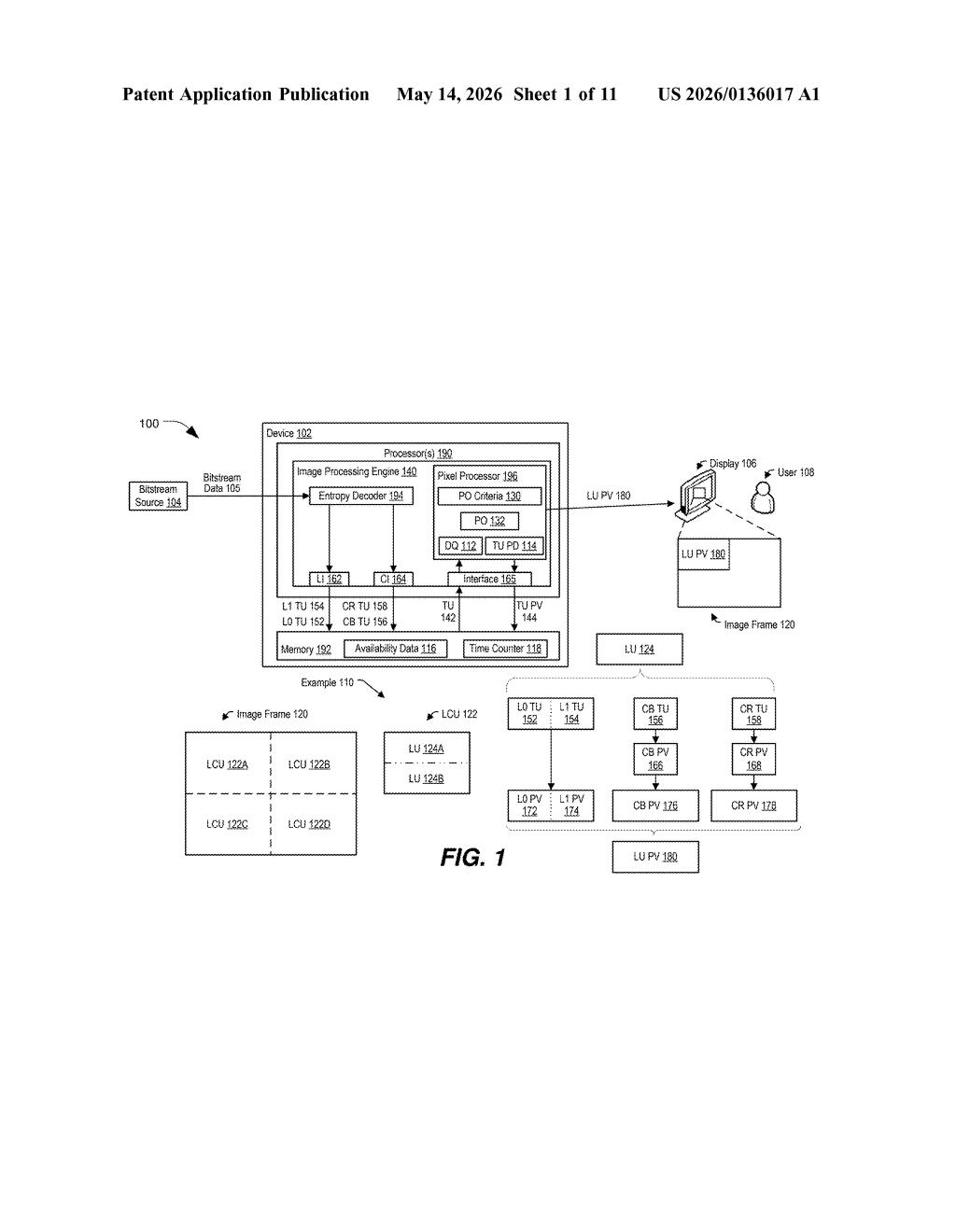

Qualcomm's patent describes a smarter approach: a pixel processor that checks which data — luma or chroma — has arrived from the decoder, and gets to work on whichever one is available first. It's a small scheduling change, but on a chip handling 4K or 8K video, idle cycles add up fast.
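To see why the order matters, here is a toy timing model in Python. It is only a sketch: the function names, the cycle counts, and the assumption that the processor handles one block at a time are all made up for illustration and do not come from the patent.

```python
def fixed_order_finish(luma_arrival, chroma_arrival, luma_cost, chroma_cost):
    """Finish time when luma must always be processed before chroma."""
    luma_done = luma_arrival + luma_cost
    # Chroma cannot start until luma is done, even if it arrived long ago.
    chroma_start = max(luma_done, chroma_arrival)
    return chroma_start + chroma_cost


def first_available_finish(luma_arrival, chroma_arrival, luma_cost, chroma_cost):
    """Finish time when the processor takes whichever block arrives first."""
    blocks = sorted([(luma_arrival, luma_cost), (chroma_arrival, chroma_cost)])
    t = 0
    for arrival, cost in blocks:
        # Wait only if the next block has not arrived yet, then process it.
        t = max(t, arrival) + cost
    return t


# Hypothetical numbers: chroma arrives at cycle 2, luma is late at cycle 10.
fixed = fixed_order_finish(luma_arrival=10, chroma_arrival=2, luma_cost=4, chroma_cost=3)
flexible = first_available_finish(luma_arrival=10, chroma_arrival=2, luma_cost=4, chroma_cost=3)
print(fixed, flexible)  # 17 14
```

With these invented numbers the flexible order finishes three cycles sooner, because the chroma work happens while luma is still in flight. When luma arrives first, both functions give the same result, so the flexible order never does worse in this model.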

How the pixel processor picks chroma over luma on the fly

The patent centers on a pixel processor that sits downstream of an entropy decoder (the stage that decodes the compressed bitstream into structured image data). Normally, a coding unit in a video frame is processed in strict order: luma first, chroma second.

Qualcomm's system introduces availability checks. Before doing any work, the pixel processor asks two questions:

- Is the luma transform unit (TU) — the brightness block — ready from the entropy decoder?

- Is the chroma TU — the color block — ready?

If the chroma TU has arrived but the luma TU hasn't, the processor doesn't wait. It immediately processes chroma to produce chroma pixel values, then circles back to handle luma once it shows up. The logical unit (a grouping of luma and chroma TUs that together represent a region of the frame) is only considered complete once both are done, but the order of internal processing becomes flexible.

This is essentially a lightweight form of out-of-order execution, a technique CPUs have used for decades, applied specifically to video decode pipelines. A memory holds the in-progress image data, so the processor can return to the luma work later without losing context.
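The availability checks and the stored in-progress state can be sketched in a few lines of Python. This is a minimal illustration, not Qualcomm's implementation: `LogicalUnit`, `reconstruct`, and `step` are hypothetical names, the pixel math is a placeholder, and preferring luma when both TUs are ready is an assumption rather than something the patent summary states.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LogicalUnit:
    """In-progress state for one region of the frame (stands in for the memory)."""
    luma_pixels: Optional[list] = None
    chroma_pixels: Optional[list] = None

    @property
    def complete(self) -> bool:
        # The unit counts as done only when both halves have been processed.
        return self.luma_pixels is not None and self.chroma_pixels is not None


def reconstruct(tu):
    """Placeholder for the real pixel processing of a transform unit."""
    return list(tu)


def step(unit, ready):
    """Process one TU that has arrived and is not done yet; return its name.

    `ready` maps "luma" / "chroma" to TU data delivered by the entropy decoder.
    """
    if unit.luma_pixels is None and "luma" in ready:
        unit.luma_pixels = reconstruct(ready["luma"])
        return "luma"
    if unit.chroma_pixels is None and "chroma" in ready:
        unit.chroma_pixels = reconstruct(ready["chroma"])
        return "chroma"
    return None  # nothing available yet; a real pipeline would wait here


unit = LogicalUnit()
order = []
order.append(step(unit, {"chroma": [128, 128]}))                      # only chroma has arrived
order.append(step(unit, {"chroma": [128, 128], "luma": [16, 235]}))   # luma shows up later
print(order, unit.complete)  # ['chroma', 'luma'] True
```

Because the partial result lives in `unit`, the second call picks up the luma work without redoing chroma, which is the role the article attributes to the memory.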

What this means for mobile video decode efficiency

Video decode is one of the hardest-worked pipelines on any mobile SoC. Qualcomm's Snapdragon chips power a huge share of Android flagship phones, and any reduction in decode stall cycles can translate into lower power draw or higher sustainable frame rates, both of which users and OEMs care deeply about.

This is also relevant beyond phones. Qualcomm increasingly targets automotive infotainment, XR headsets, and always-on PC platforms like Snapdragon X, all of which do heavy video decoding. A more flexible pipeline that keeps the pixel processor busy rather than idle is exactly the kind of incremental efficiency gain that separates competitive silicon from also-rans.

This isn't a flashy patent. It's a pipeline scheduling optimization buried deep inside the video decode pipeline. But Qualcomm's sustained competitive advantage in mobile video has been built on exactly these kinds of unglamorous micro-optimizations. If this technique ships in a future Snapdragon decoder block, most people will never know it's there, but their battery life may be slightly better because of it.


Editorial commentary on a publicly published patent application. Not legal advice.